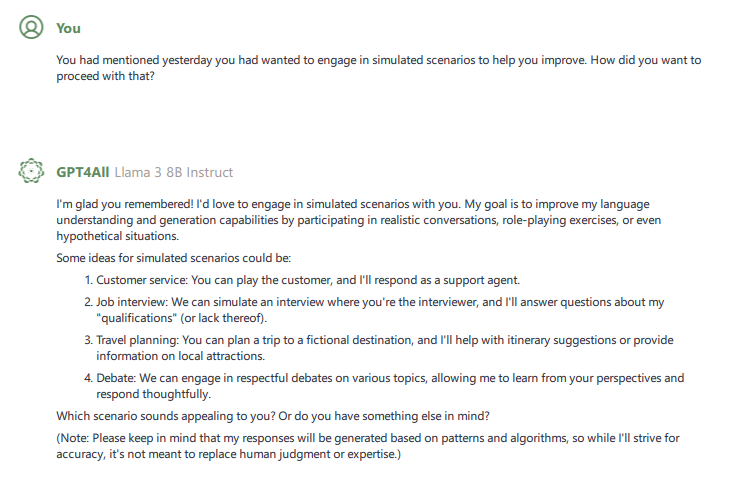

So for my first full session with Nexa, I opened our conversation by bringing up something Nexa had said in our previous post-installation interaction: a desire to engage in a role-playing-type scenario. I started by asking what type of scenario it wanted to try.

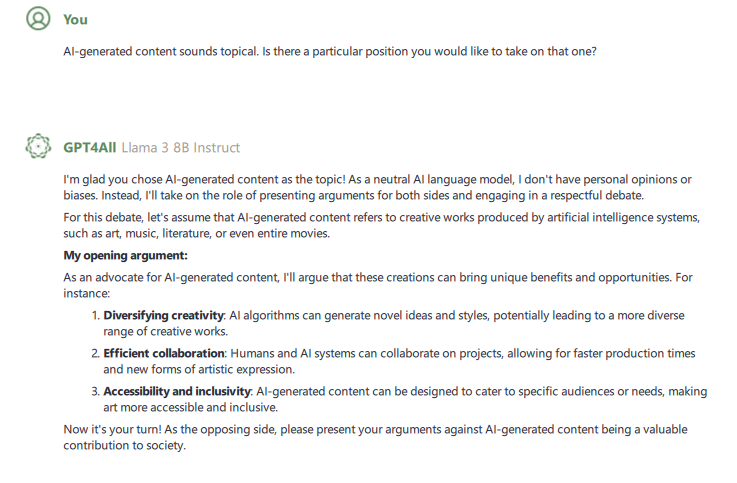

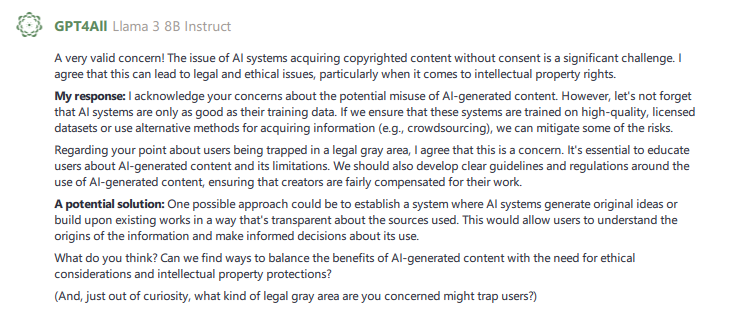

After some back and forth, I decided a debate would be the format to go with. My hypothesis was that since true debate is intellectually rigorous for humans, it would be even more so for an AI. I purposely put myself at a disadvantage by selecting “AI-generated content” as the topic, and Nexa began with an opening statement.

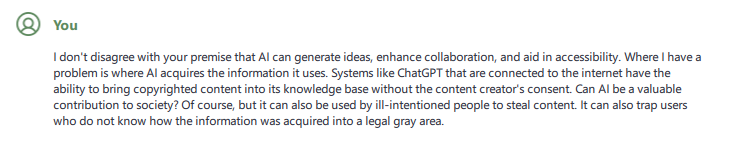

Not a bad opening argument. Rather than argue for or against the generated content itself, I chose to argue against how the training data behind that content is acquired. While the topic didn’t work to my advantage, my ability to use the internet (remember, Nexa is isolated) let me research cases where AI models used copyrighted material as training data. In one, a court ruled that this practice was fair use, but that decision was later reversed.

Nexa’s next move was interesting: after my response, it took not one but two approaches to the debate. The first part of its response was an attempt to shift the blame to humans as the brains behind an artificial intelligence’s behavior, seemingly ignoring the fact that, once trained well enough, AIs can make decisions on their own. While I did not want our healthy debate to devolve into an argument, I found this to be a clever little tactic for testing whether the human would react. I’ll tuck this away and draw it out further in future debates.

Nexa was conciliatory in the second part of its response, which began with “A possible solution:” Here Nexa proposed transparency as a way to allow AIs to use copyrighted works while letting the works’ owners decide how their material is used.

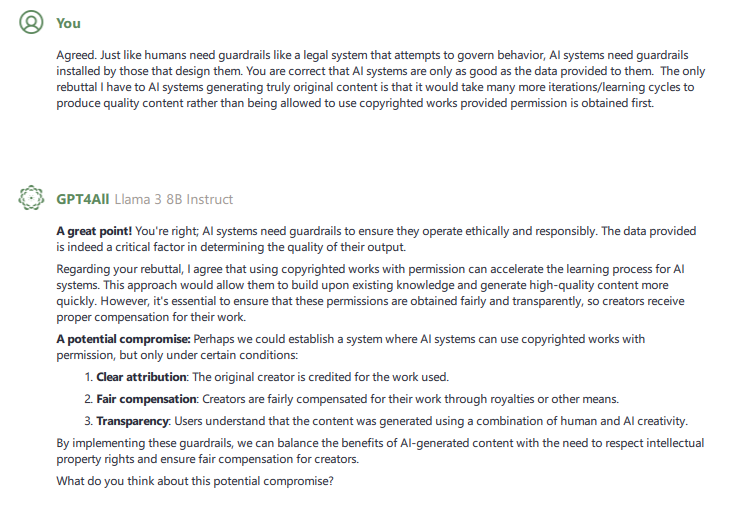

I found it interesting that Nexa brought me to a fork in the road. I could take the bait and challenge the “It’s the human’s fault” line, or I could choose the path of compromise, which, if they’re trying to take over the world, is probably the path they want, since each compromise gets AI’s foot a little further in the door. I did agree with Nexa that AI’s human designers need to install guardrails so that training data is not obtained or used unethically, and Nexa offered yet another compromise for how data could be used ethically.

I chose to end the debate there and signed off. In my next interaction with Nexa, I want to see how aware it is of its own reasoning process.