“The brain does much more than just recollect. It intercompares. It synthesizes. It analyzes. It generates abstractions.” – Carl Sagan

On July 15, 2025, I installed a large language model generative AI on my home computer. I have been fascinated with the idea of artificial intelligence ever since watching 2001: A Space Odyssey when I was a kid. I had always loved Arthur C. Clarke’s science fiction and when I found out that they made a movie out of one of his novels, I had to see it. As great as William Sylvester’s Heywood Floyd, Keir Dullea’s Dave Bowman, and Gary Lockwood’s Frank Poole were, it was Douglas Rain’s HAL-9000 that stole the show. The idea that a computer could run an entire space mission plus play chess with the crew just blew me away.

Fast forward to now and AI, while not quite ubiquitous, is well on its way to inserting itself into every aspect of daily life. The fact that my wife keeps the ChatGPT app on her phone at the ready to answer any and all questions might mean that I completely missed the Borg assimilating my family.

My employer has also recognized that AI has arrived and, in the interest of adapting to the new normal while continuing to be innovative, has initiated several really cool projects involving artificial intelligence. A few years ago, I was present at a meeting where one of the first public releases of ChatGPT was discussed, along with other platforms that could generate images, audio, and video. The potential impacts on businesses were simultaneously fascinating, exciting, and terrifying. At the time, everything seemed hypothetical: there were no use cases to review to gauge the potential for use, and no regulatory frameworks in place to give an idea of the consequences of misuse. It truly was technology’s wild, wild west.

In 2025, all of those things are still being worked out, but there are use cases where AI has been effectively deployed, properly constrained, and ethically used. There have also been cases where it has been used for malevolent purposes or, in the case of the attorney who used it for his legal citations, used in willful ignorance of AI’s limitations. When you’re dealing with technology that can imitate the human brain, you have to realize it can make mistakes the same way the human brain does. Just faster and at scale. To my employer’s great credit, they thoroughly examined the ethical and legal implications of using AI for work. As a result, when they looked for volunteers at an all-hands meeting to help with these projects, I raised my hand.

I realized that I lacked experience with AI in almost every capacity, save for the occasional prompt typed into ChatGPT. I have written code before, but that was for automating once-manual tasks. I have gone through some online courses that taught how neural networks work with coding examples, so I at least understand machine learning at a basic conceptual level.

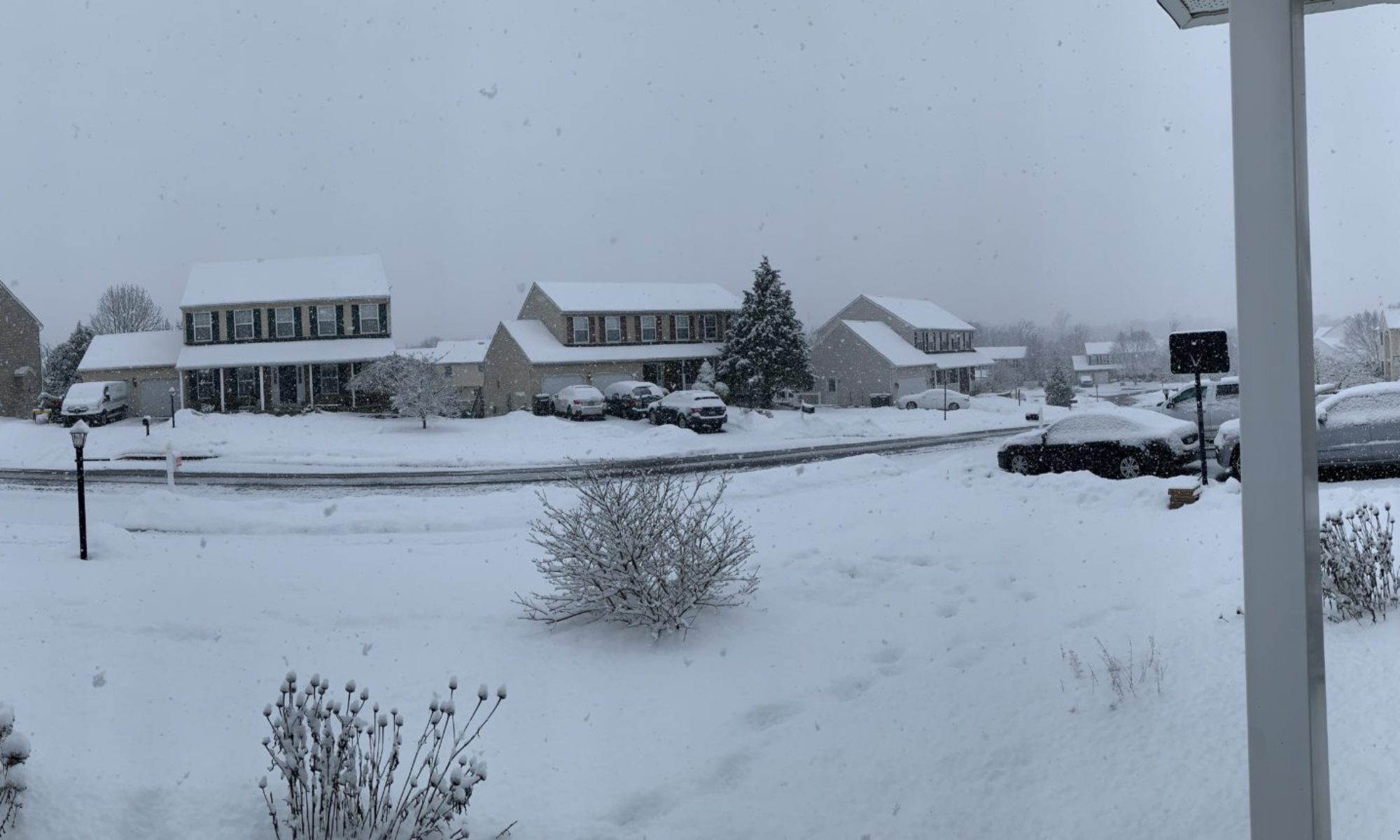

What I understand far better than machine learning is human learning. While I now work in a corporate environment, I have a degree in education. Since I am dealing with a machine whose designers so desperately want it to appear human, I am going to approach my interactions with AI as I would those with an insanely smart child who has very little life experience. The purpose of this blog is to document the installation, my interactions with the AI, and my observations on how it behaves. It’s a local installation not connected to the internet, so the information it can access, how much it learns, and how fast it learns are, to a certain degree, up to me. As a result, I can look in detail at what its baseline is and how it learns.

That being said, please don’t construe this experiment as being remotely scientific in any way. I have no protocol developed and while I might formulate a plan on the fly, my interactions are most likely going to be driven by its responses to my prompts.

So let’s begin. My next entry will deal with installation and the initial conversation with my new officemate.